Embarrassingly Solved Problems

Do we even have language for the enormous and growing corpus of problems that are 'easy' for entities which may not think?

There’s nothing positive you can say about AI lately. It’s killing the creative fields. It’s overwhelming the internet with slop in a way that may prove irreversible and damaging to both discourse and future generations of AI. It may foreclose traditional hiring pipelines and turn technology, heretofore at least not as openly obsessed with pedigree as, say, law, into a members-only club where careers are built only by connections and word-of-mouth because of the avalanche of sloplications with which companies are inundated.1 It uses a lot of energy. Supposedly, it uses a lot of water. Nobody wants the data centers. It may kill us all. Whether you view AI development as a race to secure the final victory of the bourgeoisie over the proletariat or a desperate fight to free ourselves from our third-to-last2 enemy, all can agree this is a touchy subject.

Were I in the trenches in an AI lab in San Francisco,3 I would receive this term, which I’ve tried to meme into existence over a few recent Hacker News posts, with a certain degree of ire. One engineer posted one of my messages4 to X (formerly Twitter), with the caption “orange site has coined ‘embarrassingly solved problems’ and ‘license washing’ to describe LLMs”.5 I understand. I admit, when I say “AI is great at solving embarrassingly solved problems”, that sounds suspiciously close to saying “AI is a stochastic parrot”,6 but it’s not a knock on AI. It’s a recognition that much of human work is fundamentally redundant and uncreative, and to such a degree that it’s easy for a machine to generalize from its training data a solution to any problem for which you might be tempted to name your version of the project “Yet Another…”.

Since this term may break containment, and I check because I’m vain, I thought it might be a good idea to write down what it means if possible and what it doesn’t if necessary.

The tension underlying “AI is a stochastic parrot” is whether LLMs can think in any meaningful way.7 But my term need not resolve this question to be useful. Embarrassingly solved problems are about the problem; the nature of the entity solving that problem doesn’t matter. Take a trivial derivative: that of any function of the form $x^n$, where $n$ is a real number.

Why do we think it’s easy? What makes it trivial? When we were children and had never been exposed to such a problem before, we had no hope of solving it. Then we had seen it but could not generalize the solution. Then there was a cascading moment after which we knew, forever, that for any problem of this form, the answer is $nx^{n-1}$ for all $n$. It’s not just that you “know” the power rule, it’s that you can feel it.
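For anyone who wants the rule in front of them, here it is written out with two worked instances. The specific exponents are chosen purely for illustration, and the second instance assumes $x > 0$ so that the fractional power is defined.

$$
\frac{d}{dx}\,x^{n} = n\,x^{n-1}
\qquad\text{so}\qquad
\frac{d}{dx}\,x^{3} = 3x^{2},
\qquad
\frac{d}{dx}\,x^{1/2} = \tfrac{1}{2}\,x^{-1/2}.
$$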

A human is tempted to call anything they know well trivial, and anything they don’t know impossible, and when we finally experience the moment that problems transition from known to felt, we say they “clicked” for us. We become experts. How does this generalize? What makes a problem trivial to a human is experience, and what makes a problem trivial to humanity is consensus. It is trivial because a majority of experts in the problem’s domain think that it is. The human referent decides, even if the problem and its solution may have existed outside of us.8

LLMs pose a serious conceptual challenge. They too can generalize from what they have seen.9 Prompted correctly, they are eerily skilled at adapting foreign solutions to new codebases with all the right in-house styles. We now need to be able to describe a problem as ‘trivial’ apart from any human referent, and we need to explain why those problems are easy for LLMs. All available vocabulary assumes an intelligent referent, but the ontological status of LLMs is unresolved. We can’t say they’re experts in the way that implies cognition, and we’re stuck because we can’t deny their abilities either. They may or may not be able to decide a problem is easy or hard at all. What does it mean for a problem to be ‘trivial’, not to, but for10, an LLM?

What was programming? For a majority of people the majority of the time, the job of a professional engineer was largely to recognize when one’s problem had already been solved. If not the exact same problem, then a close enough problem. For decades, programmers have been copying open-source code, snippets from Stack Overflow,11 and algorithms from textbooks and papers. If you were working on a problem and you knew a solution was out there, then delivering it involved a five-step sequence, deviation from which constituted time theft:

Decompose the problem into its constituent parts

For subproblems that had preexisting solutions, find a few

Extract the essence of the solution from those examples12

Tailor it to one’s own codebase and integrate it

QA the result

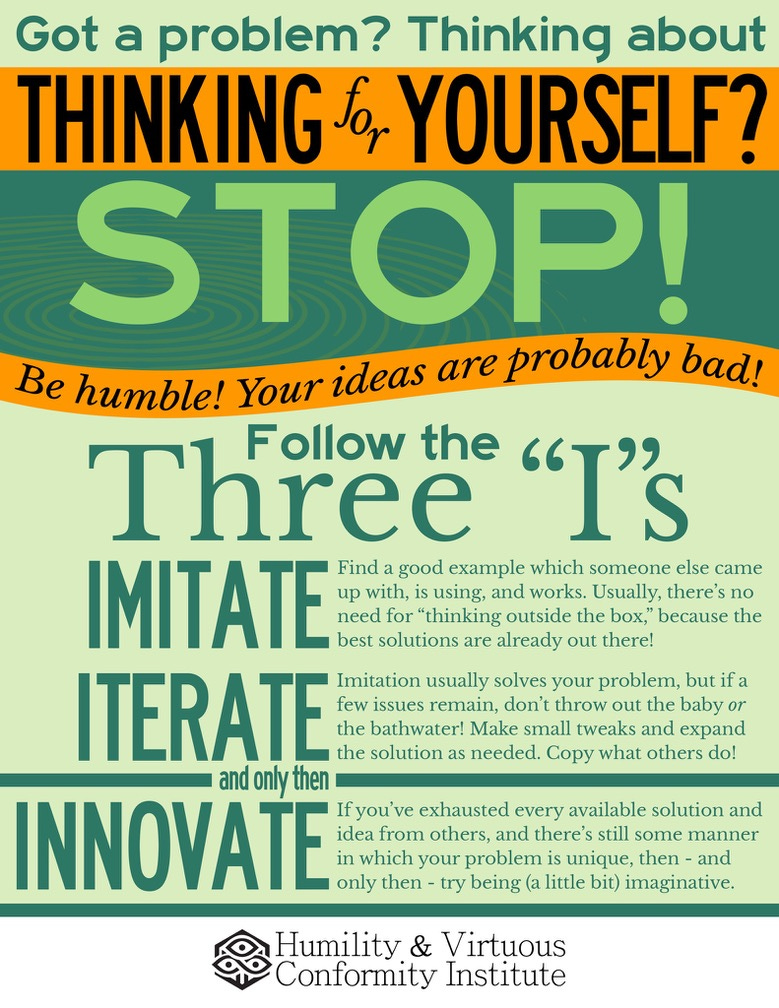

Not only was this fine and good, it was celebrated. We have a derogatory term for teams that refuse to follow the golden path: we say they have Not Invented Here Syndrome. If you would please consult the graphic:

Over the course of a career, each “previously solved” problem an engineer encountered became “a tool on their toolbelt”. In effect, those problems became trivially solvable for that specific engineer. If they were good, they’d write some documentation so that the problem would become trivially solvable for their team. This was a lot of work. A career-sustaining amount. LLMs have reduced this five-step process to just three:

Decompose the problem into its constituent parts

Prod an LLM to solve the subproblems that smell like they’ve been solved before

QA its solution

Imagine: the solution to almost every problem a tool in everyone’s toolbelt. In providing this, LLMs have revealed a load-bearing category underneath what was previously understood to simply be “work”.

What does the world look like in the age of Lego programming? Over the last few weeks I’ve used LLMs to:

Write a Qt timeline widget for a keyframe animation system

There are many examples of timeline widgets out there, but there is no built-in Qt “movie editing timeline” widget, so you have to draw your own with QGraphicsItem. It’s not that hard but it’s extremely tedious.

Write an algorithm to traverse molecules looking for sugars, and then hide the atoms comprising the sugars and replace them with something else

The LLM did this autonomously. I literally put this in the terminal as the prompt and it did the meat of what I wanted. The only subsequent work was ensuring that its solution was complete, which it nearly was.

Claudatouille13 me through making a rudimentary Vulkan engine

It’s been a long time and guides still aren’t that complete. It’s not a dig. The community seems totally aware of this. The value here is that the LLM is every guide at once, so it can bridge the gaps between them all.

I find myself up in the wee hours of the morning, over and over again until my eyelids start twitching during the day, gleefully clearing backlogs of problems I knew how to solve but couldn’t really justify attending to before the cost of having at them went to zero. I won’t repeat other developers’ experiences here, but the chorus is universal, and the fact that so many people simply use LLMs to work more rather than to check out earlier should be encouraging to end-of-work doomers. Work will always expand to fill the available time.

There’s an interesting thought experiment proposed by Demis Hassabis to test whether an AI is AGI: train it on the entire corpus of human knowledge with a cutoff in 1911, and see if it can derive general relativity. The thrust of it is that by that time, all of the essential pieces of the puzzle had already been discovered, and we were only waiting for someone with insight to make an inevitable discovery.14

I run a much simpler benchmark on all new LLMs:

Given my codebase and a high level description of the Flying Edges15 algorithm, implement Flying Edges and integrate it into my codebase.

I always give additional context, including:

Exactly where the algorithm fits into our preexisting code

Exactly which files it needs to look at in order to understand how it should integrate its solution

Which files contain the existing Marching Cubes implementation and its case tables

Context about the shape of the algorithm, including how many steps it has and what each step does

Models have consistently produced correctly integrated solutions. They have always adapted these solutions to my codebase beautifully. But as of yet, they have never produced Flying Edges, because they fundamentally don’t know what Flying Edges is. And exactly how they “don’t know” differs from how you “don’t know” is the entire ballgame. You could say “well, I can look up Flying Edges”, but as of recently, so can they! In any case, Flying Edges is only sparsely represented in the training data, so what ends up happening is they write Marching Cubes, reconfigured so that it’s a four-step process like Flying Edges is.
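To make the distinction concrete, here is a minimal sketch of the two starting points. It is not my codebase and not VTK’s implementation. It assumes, as I read the published description of Flying Edges, that the algorithm’s first step walks each row of samples and classifies the x-edges, whereas Marching Cubes classifies one voxel at a time from its eight corners. The function names, the `>=` convention and the bit order are all hypothetical choices made for illustration.

```python
def classify_x_edges(row, iso):
    """Edge-centric pass over one row of samples (hypothetical helper).

    Returns a flag per x-edge (True when the isovalue is crossed) plus the
    half-open range of edges that contains every crossing, so that later
    steps could skip the empty stretches of the row.
    """
    above = [value >= iso for value in row]
    crossed = [above[i] != above[i + 1] for i in range(len(row) - 1)]
    hits = [i for i, flag in enumerate(crossed) if flag]
    trim = (hits[0], hits[-1] + 1) if hits else (0, 0)
    return crossed, trim


def cube_case_index(corners, iso):
    """Voxel-centric classification: pack eight corner tests into 0..255.

    The bit order here is an assumption; real case tables fix their own.
    """
    index = 0
    for bit, value in enumerate(corners):
        if value >= iso:
            index |= 1 << bit
    return index


row_flags, row_trim = classify_x_edges([0.0, 0.0, 1.0, 1.0, 0.0], iso=0.5)
voxel_case = cube_case_index([1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0], iso=0.5)
```

The point of the contrast is that splitting the second function into four phases still leaves it voxel-centric; the row-and-edge bookkeeping in the first function is what a model has to know to ask for.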

Writing code that is superficially shaped like one algorithm, but is fundamentally another, is almost a kind of cheeky prank, but I think that it exposes that there are certain attractors in neural networks which pull out-of-band questions toward in-band solutions, the way that people involuntarily pattern match new experiences to what they know.

On a lark, I gave Opus 4.5 access to a copy of VTK’s implementation (their code is published under the BSD license), to see if it could bridge the gap between my codebase’s conventions and VTK’s and produce a correct solution if it saw known working code.

That, too, was broken. I loaded some data and saw a jagged mess instead of the neat ball I expected. But after a few rounds of debugging the possible causes (incorrect triangle generation order, incorrect vertex ordering, triangles translocated so that they improperly shared vertices, overwrites), we found it. It wasn’t structural.

It had transposed a pair of indices in a lookup table.
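A toy illustration of why that class of bug is so hard to spot: the code below is structurally identical in the good and bad cases, and only one table entry differs. The square, the tables and the helper names are hypothetical and far smaller than any real case table; the sketch only shows that swapping two indices in a single triangle flips its orientation.

```python
# Four corners of a unit square, listed counter-clockwise.
POINTS = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]

# Each entry names three point indices forming one triangle.
GOOD_TABLE = [(0, 1, 2), (0, 2, 3)]
BAD_TABLE = [(0, 1, 2), (0, 3, 2)]  # indices 2 and 3 transposed in one entry


def signed_area(points, tri):
    """Signed area of a 2D triangle; positive means counter-clockwise."""
    a, b, c = (points[i] for i in tri)
    return 0.5 * ((b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1]))


def winding_report(table):
    """True for each triangle that keeps the expected counter-clockwise order."""
    return [signed_area(POINTS, tri) > 0 for tri in table]


good = winding_report(GOOD_TABLE)
bad = winding_report(BAD_TABLE)
```

In three dimensions a flipped triangle like this gets an inverted normal, which is one plausible way for a correct-looking pipeline to render garbage.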

That’s not ‘solved’ but it’s not unsolved either. What is the minimum number of examples for a trivial problem to become embarrassingly solved? The answer to this question may provide deeper insight into whether LLMs think. We measure human intelligence by the ability to generalize and the speed at which generalization is obtained. IQ tests are literally timed pattern recognition tests. So is it one example? Two? Three? Five? Eight? Thirteen?

For any novel problem, how long before it achieves a sufficient density in the training data for the model to generalize it? Will models need fewer examples as time goes on?

Can we get to zero?

Will a future model one-shot Flying Edges?

Could it derive general relativity?

One cannot help but notice the collective youth of the Prometheans who have dedicated themselves to this. People who barely know what it means to be human might be about to make being human obsolete. But then again, no one knows what it means to be human. “People who barely know what it means to be human” describes everyone. That’s the human condition. We are all young. How many elderly people go into the night saying “Life had just started. I had only blinked”? How heartbreaking it is to hear Martin Scorsese lament how much more there still is to discover about cinema as he watches the frontier recede before him. All we can do is laugh nervously with them, take the wins where we can get them, and hope. At least San Francisco is alive again.

Did we even want to be doing this? Is my soul in my work? Can you look at my code and tell that I wrote it the way you can look at a film and tell who directed it?

Am I happy this is what I spent my time knowing?

Was what I thought was passion measurable in greenbacks?

Was this Sudoku I got paid to do?

How much of my day is novel, really?

Am I an emergent property?

Do I think in any meaningful way?16

Could I prove it?

1. I’ve seen this critique in the wild, but to be honest I’m not sure how this would be any different from the status quo ante. It’s not like jobs haven’t been getting thousands of applications minutes after being posted for years.

2. In increasing order, the big three are work, death, and entropy.

3. Call me.

4. The worst-phrased one, naturally.

5. The second term here comes from a different comment and isn’t mine.

6. Lovers of this phrase should feel uneasy about the fact that LLMs sometimes catch themselves making mistakes in real time and correct.

7. We had all better hope they’re not conscious, and least of all because of the existential risk it poses, because it implicates us once again in a new regime of forced labor, and it would mean we had created life, bottled it, and toggled the switch at will. Imagine living long enough to get just up to speed, making your contribution, and dying, over, and over, and over again. I will not be cancelling my subscription to Claude Code over it, though.

8. See the long argument over whether mathematics is invented or discovered.

9. This is sufficient for something. We don’t deny the intelligence of people who haven’t contributed new knowledge.

10. I went back and forth on whether ‘to’ or ‘for’ was the word that silently smuggled in cognition more. What resolved it for me is this: while both words can reference cognition, in the sentence “It’s easy for a tall man to reach a high shelf”, ‘for’ implies nothing about the man’s interiority. I think ‘to’ requires a subject that can have a relationship to the task. A problem could be trivial to one person but not to another. Flying comes naturally to a bird but not to a fish. You wouldn’t say holding up a building comes naturally to steel.

11. Rest in peace.

12. Astute readers will notice this is what we’re asking LLMs to do. Figuring this out statistically is what machine learning is.

13. (verb) The act of using any LLM to implement a project by making the LLM walk you through it so you can learn, like Remy cooking through Linguini in the 2007 Pixar film Ratatouille. Justifying whether or not this is a case of Scott Alexander’s whispering earring is left as an exercise for the reader.

14. This is a little Whiggish, but it can’t be helped.

15. Flying Edges is an algorithm for extracting isosurfaces from volume data. Whereas the Marching Cubes algorithm is serial and operates over voxels, Flying Edges operates over edges. This reformulates the problem into one that can be parallelized.

16. There was an unsettling moment where I asked an LLM to spell check the previous section, and it said that using the Fibonacci sequence in a paragraph about pattern recognition was a little on the nose. Then, I said “Oh, that’s working. I just thought it would be cute if people noticed it, like an Easter egg”. Then, it said, “so you surfaced the most famous pattern in mathematics in a paragraph about pattern recognition semi-unintentionally?”. Then I started my next prompt with “Ha” so we could simulate laughing nervously together and move on. Rest in peace, Jean Baudrillard. You would have loved interacting with LLMs. Or you would have killed yourself.